Invited Keynote Speaker

Dr. Tianfan Xue is a researcher on the computational photography team at Google Research. He received his Ph.D. from the Computer Science and Artificial Intelligence Laboratory (CSAIL) at the Massachusetts Institute of Technology in 2017. He also served as web chair of CVPR 2020. His research focuses on making computational photography networks accessible to millions of users by making them fast, robust, and less data-hungry.

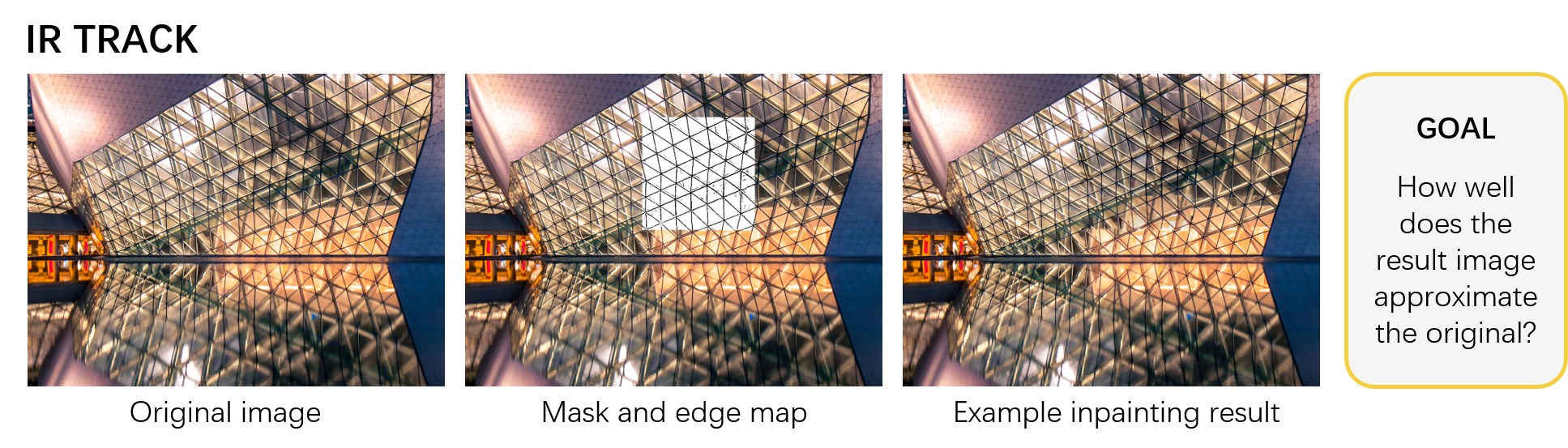

Why simulated data is important and how to use it in image processing

Deep neural networks have demonstrated impressive performance on many tasks in computer vision, speech recognition, and natural language processing. One of the main reasons for their success is the use of large, manually labeled training datasets. However, for many image processing tasks, such as denoising or reflection removal, it is often hard to obtain ground-truth outputs for a large dataset. Researchers therefore often face a dilemma: either use a small training set of real-world inputs paired with ground-truth outputs, or use a large simulated dataset that may differ from real images.

In this talk, we will show that, with a properly designed simulation pipeline, networks trained on simulated data generalize well to real images. We will demonstrate the power of simulated data on three image processing tasks: dual-view reflection removal, raw image denoising, and flare removal. We will also discuss design principles for building a better simulation pipeline.